
1/16/2024

Torch nn sequential get layers

Keywords: forward-hook, activations, intermediate layers, pre-trained

As a researcher actively developing deep learning models, I have come to prefer PyTorch for its ease of use, stemming primarily from its similarity to Python, especially NumPy. However, it has been surprisingly hard to find out how to cleanly extract intermediate activations from the layers of a model (useful for visualizations, for debugging the model, and for use in other algorithms). I am still amazed at the lack of clear documentation from PyTorch on this super important issue. In this post, I will attempt to walk you through this process as best as I can.

For the sake of an example, let's use a pre-trained resnet18 model, but the same techniques hold true for all models: pre-trained, custom or standard. If you'd like to follow along with code, post in the comments below. I will post an accompanying Colab notebook.
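To make the forward-hook idea from the keywords concrete, here is a minimal sketch. It assumes a small toy `nn.Sequential` as a stand-in for resnet18, so that nothing needs to be downloaded; the layer sizes, the `activations` dictionary and the `save_activation` helper are names made up for illustration, not part of PyTorch.

```python
import torch
import torch.nn as nn

# Hypothetical toy model standing in for resnet18 (assumption: any nn.Module works the same way).
model = nn.Sequential(
    nn.Conv2d(3, 8, kernel_size=3, padding=1),
    nn.ReLU(),
    nn.AdaptiveAvgPool2d(1),
    nn.Flatten(),
    nn.Linear(8, 4),
)
model.eval()

activations = {}  # layer name -> output tensor captured during the forward pass


def save_activation(name):
    """Build a forward hook that stores the layer output under `name`."""
    def hook(module, inputs, output):
        # detach() so the stored tensor does not keep the autograd graph alive
        activations[name] = output.detach()
    return hook


# Attach the hook to the ReLU layer (index 1 of the Sequential).
handle = model[1].register_forward_hook(save_activation("relu"))

with torch.no_grad():
    out = model(torch.randn(2, 3, 16, 16))

# Remove the hook once it is no longer needed, so later forward passes are unaffected.
handle.remove()

print(activations["relu"].shape)  # torch.Size([2, 8, 16, 16])
```

The same `register_forward_hook` call should work on a layer of a torchvision resnet18 (for example `model.layer1`), since hooks are defined on `nn.Module` itself rather than on a particular architecture.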

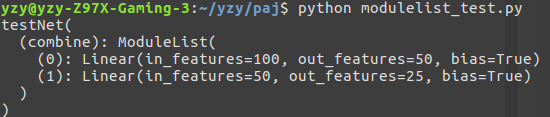

Accessing a particular layer from the model.
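As a rough sketch of what accessing a layer can look like, the snippet below uses a small named `nn.Sequential`. The model and the layer names (`conv`, `relu`, `pool`, `head`) are assumptions chosen for illustration; a real resnet18 has its own names, which `named_children()` will list.

```python
from collections import OrderedDict

import torch.nn as nn

# Hypothetical toy model; the layer names are illustrative only.
model = nn.Sequential(OrderedDict([
    ("conv", nn.Conv2d(3, 8, kernel_size=3, padding=1)),
    ("relu", nn.ReLU()),
    ("pool", nn.AdaptiveAvgPool2d(1)),
    ("head", nn.Sequential(nn.Flatten(), nn.Linear(8, 4))),
]))

# 1. By position: nn.Sequential supports integer indexing.
first = model[0]

# 2. By name: registered submodules are also available as attributes.
same = model.conv

# 3. By dotted path, which reaches into nested containers.
linear = model.get_submodule("head.1")

# 4. Listing what is there: direct children only, or every nested module.
child_names = [name for name, _ in model.named_children()]
all_names = [name for name, _ in model.named_modules()]

# 5. Slicing a Sequential returns a new Sequential with the selected layers.
backbone = model[:3]

print(child_names)  # ['conv', 'relu', 'pool', 'head']
```

Indexing is convenient for quick experiments, while names and dotted paths tend to survive better when the architecture is edited later.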
